A few months ago I published a blog post on deploying a BDC using the built-in ADS notebook. This post goes a bit deeper into deploying a Big Data Cluster (BDC) on AKS (Azure Kubernetes Service) using Azure Data Studio (ADS, version 1.13.0). In addition, I’ll go over the pros and cons of each deployment option and explain why I recommend going with AKS for your Big Data Cluster deployments.

If you’d prefer the video version (with a lot more explanation), feel free to watch it below:

BDC Deployment Options

According to the Microsoft documentation, there are two ways to deploy a Big Data Cluster:

- Kubeadm

- AKS

I’ll go into each and list the pros and cons.

Kubeadm – If you want to host your Kubernetes cluster on-premises, then this option is for you. You will have to deploy your own Kubernetes cluster with a minimum of 64 GB RAM on each host/node. The pro to this option is that you are in total control of the underlying Kubernetes cluster. The con is that you are in control of the Kubernetes cluster. :) You will be in charge of not only the worker nodes but also the master node, so you will need to ramp up your Kubernetes administration skills. It is doable, but it is a steep learning curve for the DBA, or data professional, who’s looking to get their feet wet with BDCs.

Azure Kubernetes Service (AKS) – This option allows you to deploy a Big Data Cluster on a managed Kubernetes cluster in Azure. The pros are many. From a Kubernetes admin perspective, you only have to worry about managing and maintaining the worker nodes; the master node is managed by Azure. You choose the VM size and node count before deploying the AKS cluster, and the rest is taken care of. You do not have to worry about a shortage of RAM, CPU or even storage. The only con that I can think of is cost. If you are a small business or an individual who is looking to learn BDCs, then you want to be cautious of the costs associated with deploying multiple virtual machines, etc. Azure does offer a free 30-day trial and $200 credit that you can use to learn how to deploy a BDC. I *highly* recommend using that to get your feet wet. That is plenty of credit to learn the deployment process. (I created a 4-part series on deploying a BDC that provides all the links to get started. Check out the series here.)

Why You Should Go With AKS

In a nutshell, ease and convenience. In terms of networking, storage and management, AKS is the way to go. For example, if you build a Kubernetes cluster on-premises you will have to configure the storage beforehand. The beauty of going with AKS is that you do not have to worry about it: AKS handles the storage on the back end using Azure Disks or Azure Files. If you’d like to learn more, feel free to read my blog post on why I think you should go with AKS when deploying a Big Data Cluster.

Deploy a Big Data Cluster on AKS Using Azure Data Studio

The video will walk you through deploying a Big Data Cluster on AKS using Azure Data Studio. If you haven’t watched my previous video on setting up your base machine, please watch that first as you will need that to proceed.

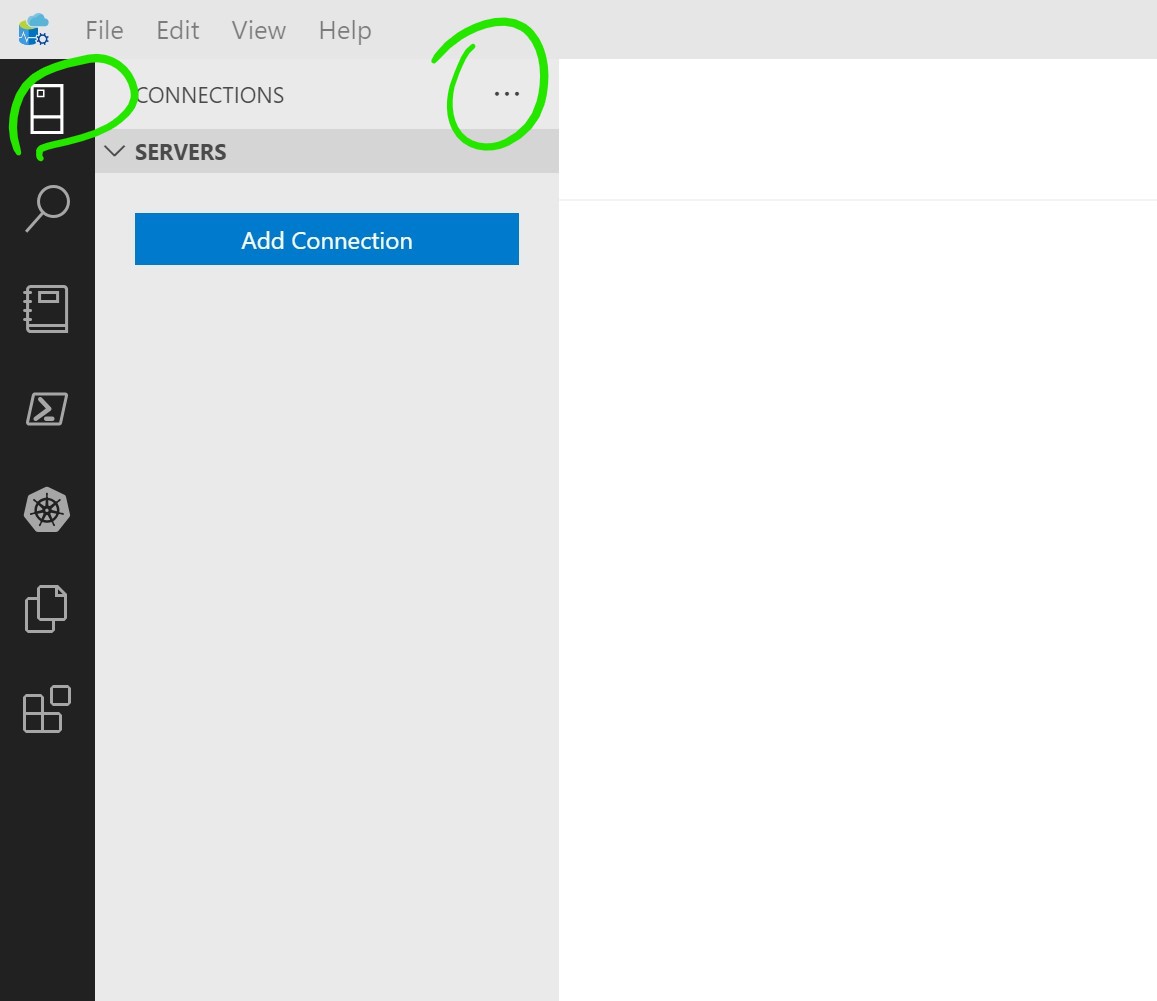

First, open Azure Data Studio from your base machine. Click on the connection tab, then “more action”, then “Deploy SQL Server” as shown in the image below:

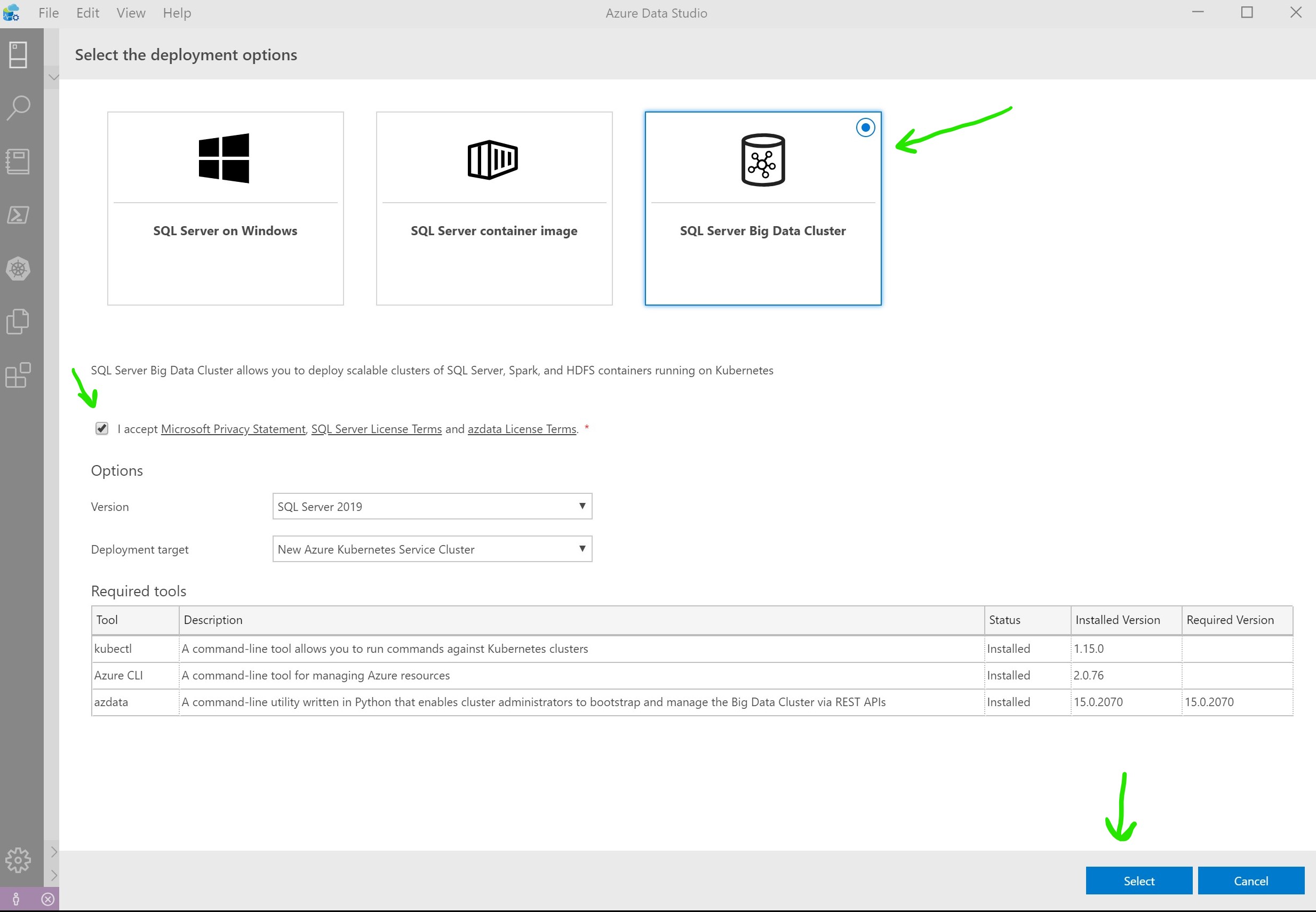

Next, choose the “SQL Server Big Data Cluster” option, check the “agree to license terms” box and click Select as shown below:

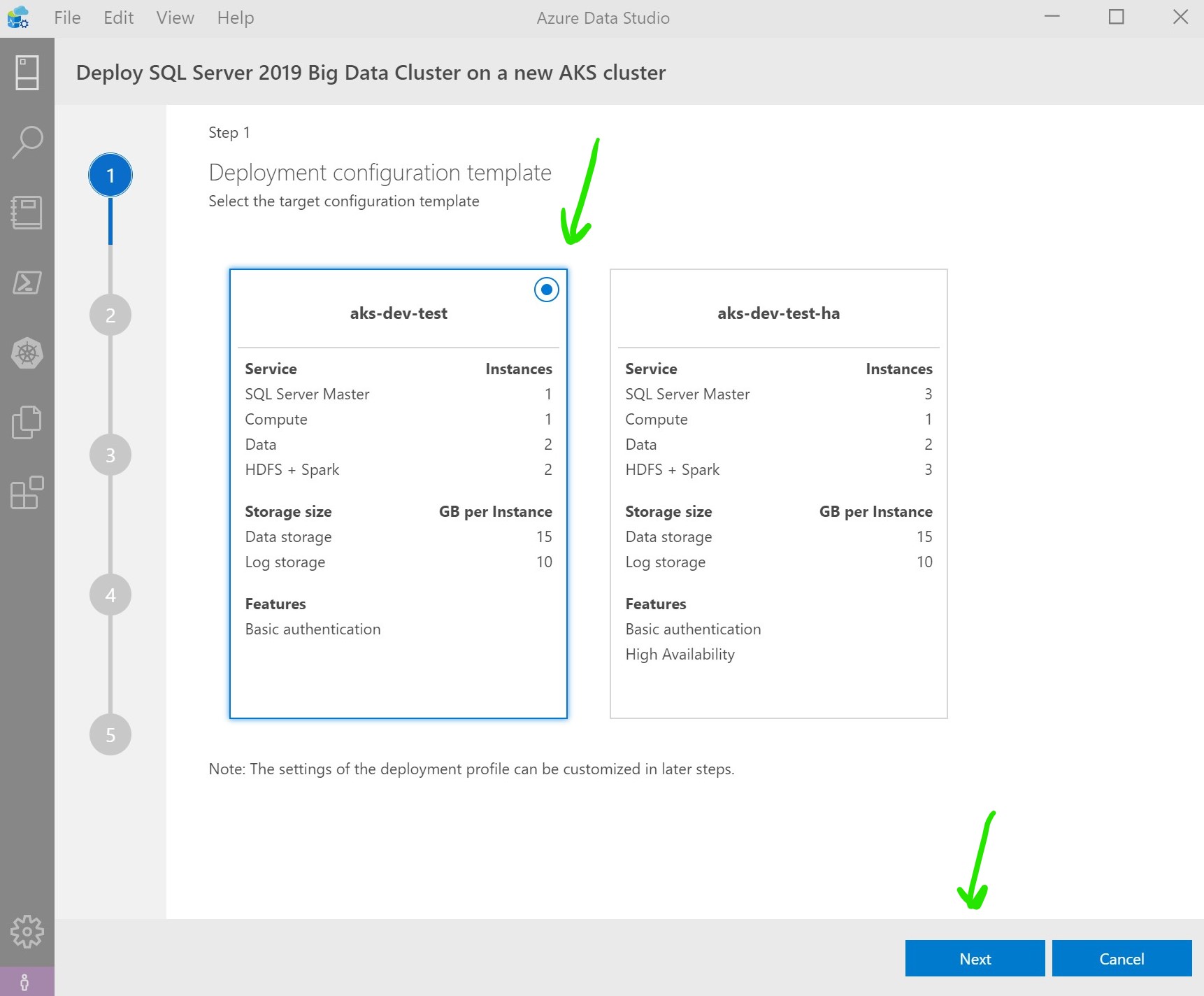

This will bring up a 5-step guide. The first step has two options: “aks-dev-test” and “aks-dev-test-ha”. The “ha” option is for when you want to include high availability with your BDC. For the sake of this blog post we will stick with the non-HA version. Leave everything as is and click Next as shown in the image below:

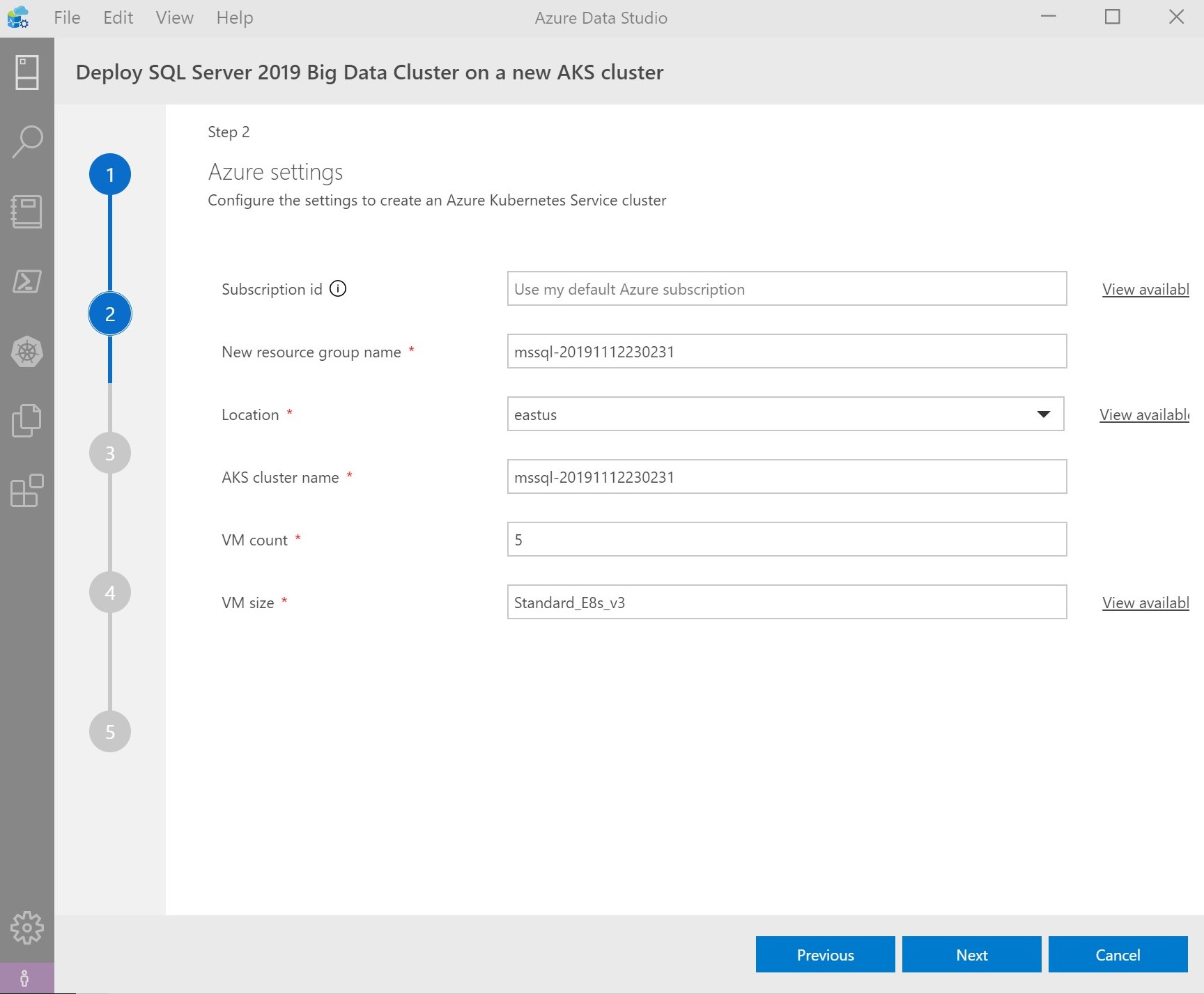

The second step is the Azure settings, where you choose the region, VM size, VM count and AKS cluster name. I will leave everything at the default values and click Next as shown in the image below:

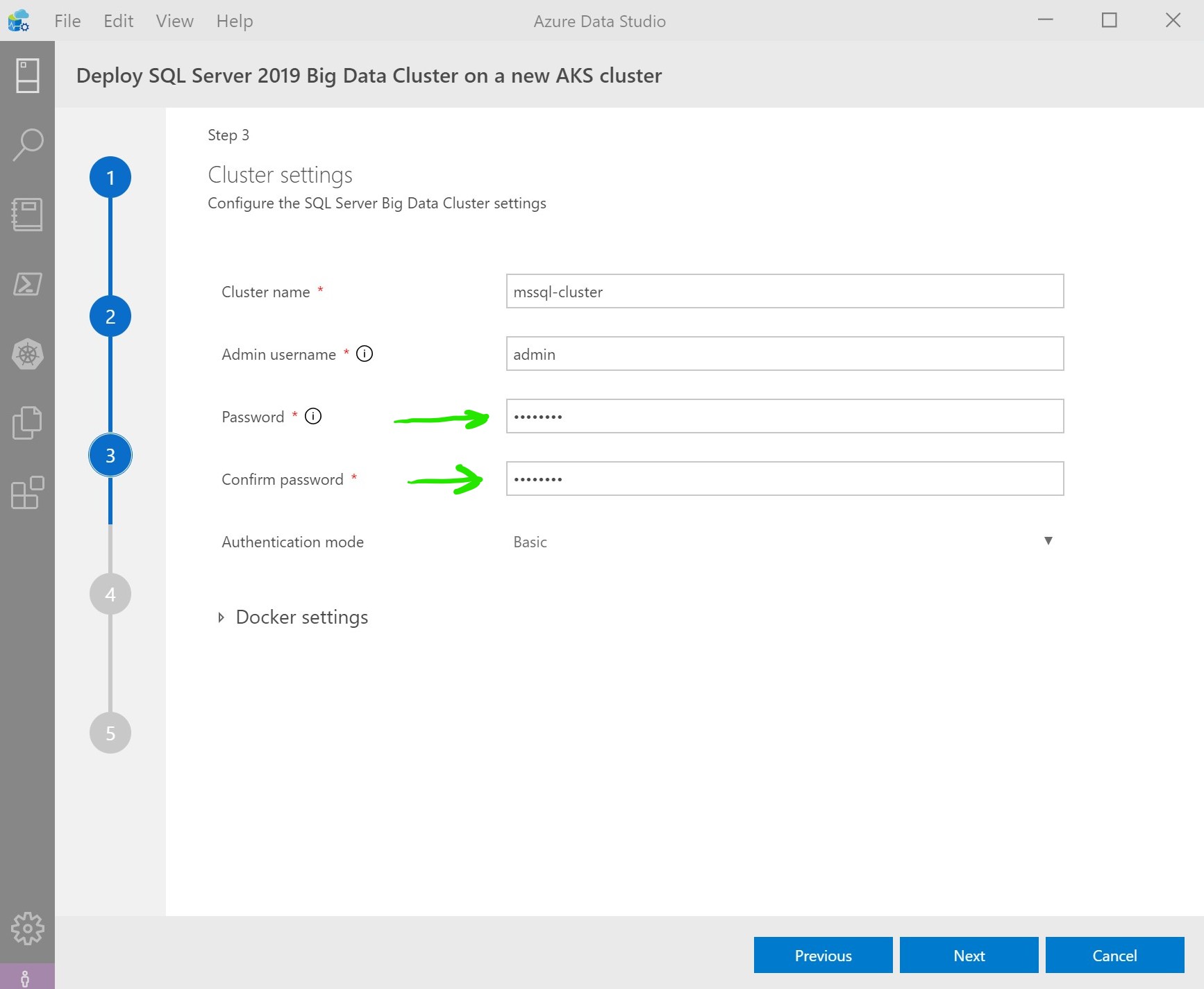
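For readers who like to see these settings as commands, here is a minimal Python sketch of the Azure CLI calls that correspond to them. It only builds the argument lists and prints them; nothing is executed. The function name `aks_create_commands` and every value in the usage example (resource group, region, cluster name, VM size, node count) are placeholders I picked for illustration, not the wizard’s defaults.

```python
import shlex


def aks_create_commands(resource_group, region, cluster_name, vm_size, node_count):
    """Build (but do not run) the az CLI commands for a new AKS cluster."""
    if node_count < 1:
        raise ValueError("node_count must be at least 1")
    return [
        # Resource group that will hold the AKS cluster.
        ["az", "group", "create", "--name", resource_group, "--location", region],
        # The managed Kubernetes cluster itself.
        ["az", "aks", "create",
         "--resource-group", resource_group,
         "--name", cluster_name,
         "--node-vm-size", vm_size,
         "--node-count", str(node_count),
         "--generate-ssh-keys"],
        # Merge the cluster credentials into the local kubeconfig.
        ["az", "aks", "get-credentials",
         "--resource-group", resource_group,
         "--name", cluster_name],
    ]


if __name__ == "__main__":
    # Placeholder values for illustration only.
    for cmd in aks_create_commands("bdc-rg", "eastus", "bdc-aks", "Standard_E8s_v3", 5):
        print(shlex.join(cmd))
```

Keeping the commands as lists makes them easy to inspect before handing them to something like `subprocess.run`, which matters when each run creates billable virtual machines.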

Next, in step 3, type in a password that will be used to log into the BDC Controller as shown in the image below:

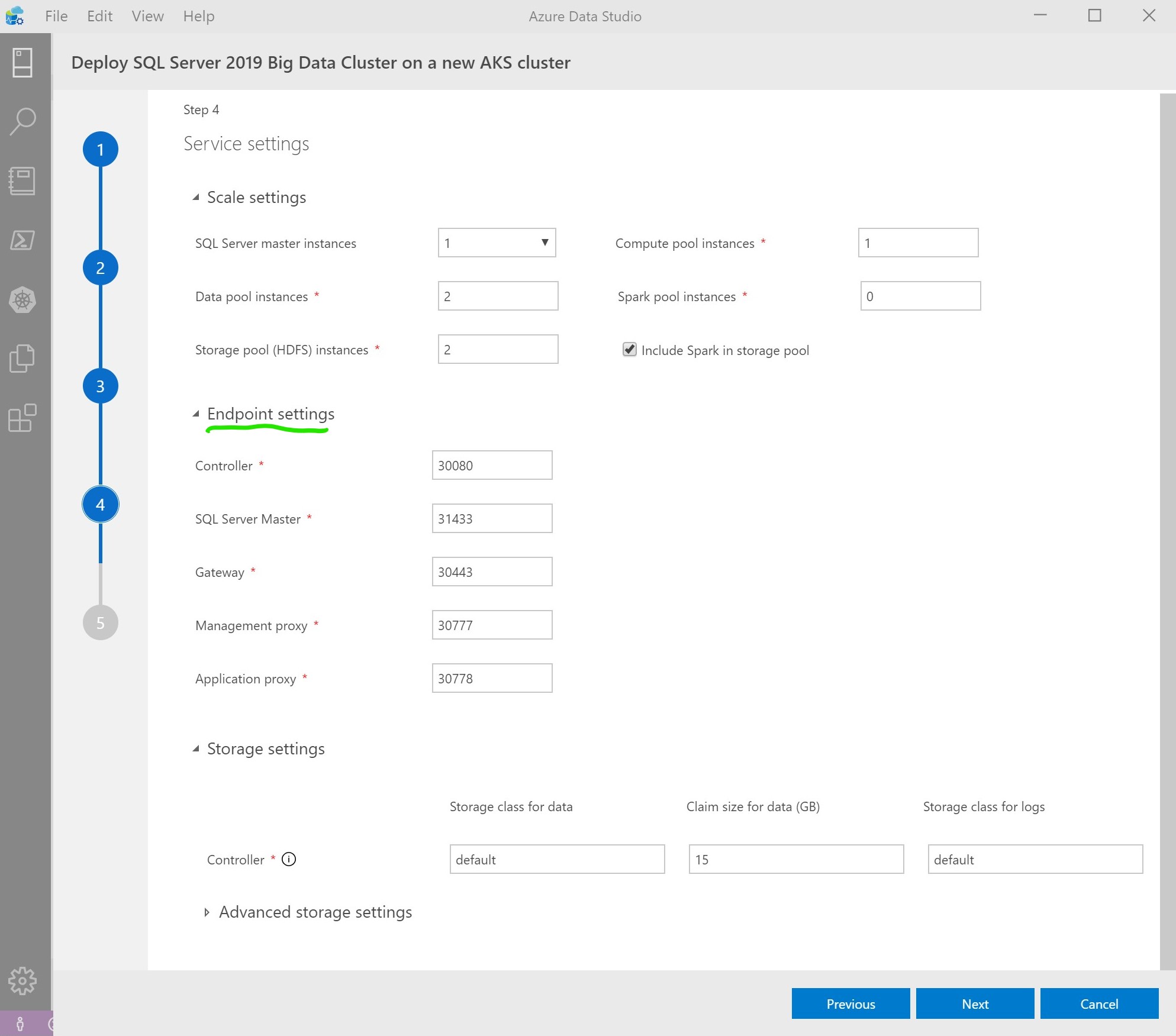
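If you want to sanity-check a password before typing it in, here is a small sketch. It assumes the usual SQL Server complexity rule applies (at least 8 characters, drawn from at least three of these four classes: uppercase, lowercase, digits, symbols). The wizard does its own validation, so treat this as a rough pre-check; `looks_strong` is a name I made up for this example.

```python
import string


def looks_strong(password: str) -> bool:
    """Return True if the password has 8+ characters from at least 3 of 4 classes."""
    classes = [
        any(c.isupper() for c in password),               # uppercase letters
        any(c.islower() for c in password),               # lowercase letters
        any(c.isdigit() for c in password),               # digits
        any(c in string.punctuation for c in password),   # ASCII symbols
    ]
    return len(password) >= 8 and sum(classes) >= 3


if __name__ == "__main__":
    print(looks_strong("Sup3rSecret!"))  # True: long enough, all four classes
    print(looks_strong("password"))      # False: only lowercase letters
```

If you later drive the deployment from the command line instead of the wizard, the azdata tool reads these credentials from the `AZDATA_USERNAME` and `AZDATA_PASSWORD` environment variables.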

In step 4 you will see the “Service settings.” Leave everything as is and click Next. Note: you can see the endpoint ports by expanding Endpoints as shown in the image below:

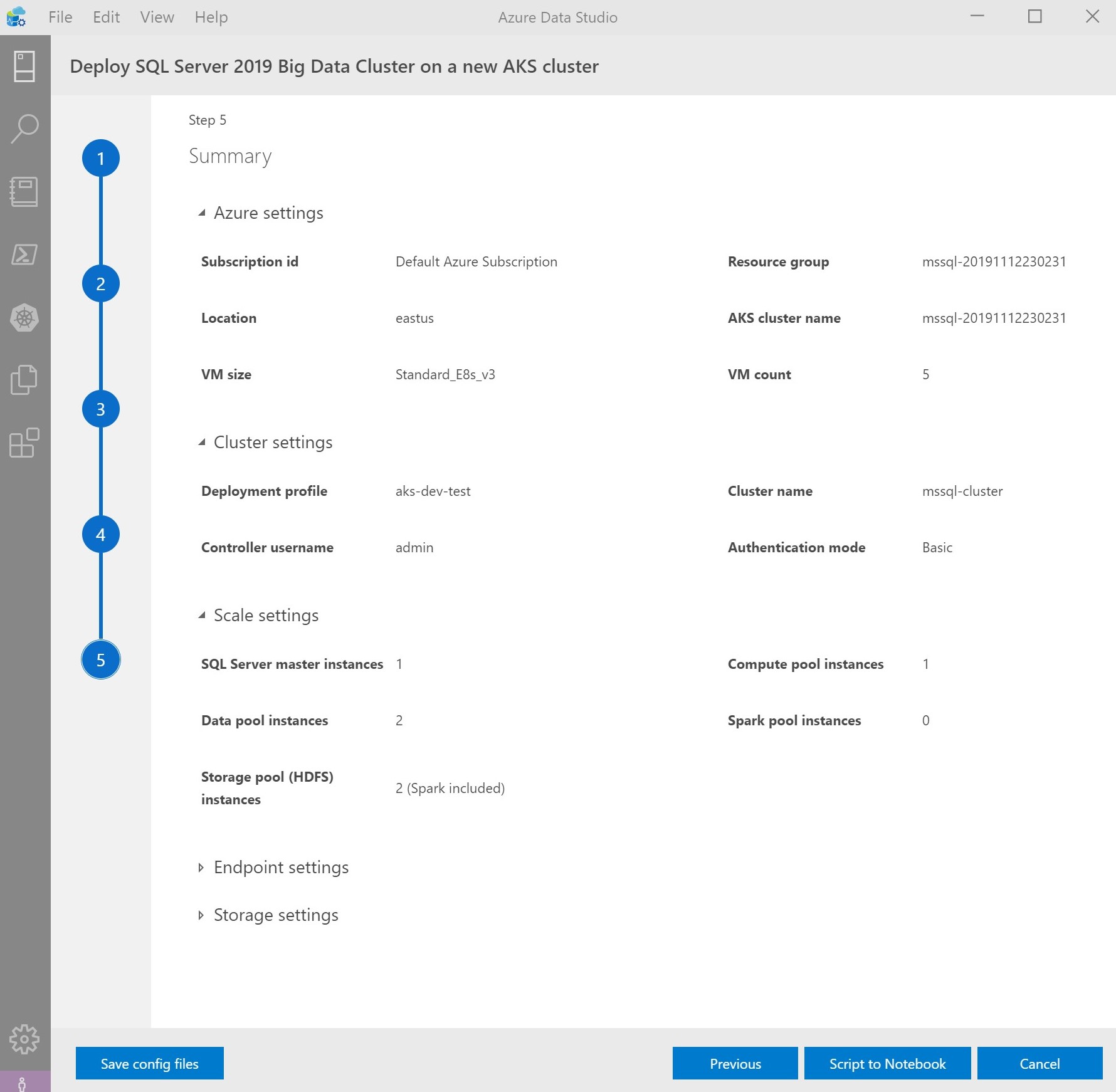

In the final step you should see a summary of all your choices, as shown in the image below:

Go ahead and click “Script to Notebook” and after a few seconds you will see the notebook in ADS that we will use to deploy the AKS cluster and the Big Data Cluster.
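To give a rough idea of what a BDC deployment looks like once the AKS cluster exists, here is a hedged Python sketch of the two azdata steps involved: initializing a configuration from one of the profiles in step 1, then creating the cluster from it. I have not reproduced the generated notebook here, and its cells may differ. The function name `bdc_deploy_commands` and the target directory `custom-bdc` are my own placeholders, and the sketch only builds the commands without running them.

```python
import shlex

# The two deployment profiles offered in step 1 of the wizard.
PROFILES = ("aks-dev-test", "aks-dev-test-ha")


def bdc_deploy_commands(profile, target_dir):
    """Build (but do not run) the azdata commands for a BDC deployment."""
    if profile not in PROFILES:
        raise ValueError(f"unknown profile: {profile!r}")
    return [
        # Copy the built-in profile into a local, editable config directory.
        ["azdata", "bdc", "config", "init", "--source", profile, "--target", target_dir],
        # Deploy the Big Data Cluster from that config directory.
        ["azdata", "bdc", "create", "--config-profile", target_dir, "--accept-eula", "yes"],
    ]


if __name__ == "__main__":
    # "custom-bdc" is a placeholder directory name.
    for cmd in bdc_deploy_commands("aks-dev-test", "custom-bdc"):
        print(shlex.join(cmd))
```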

If you want to watch me go through each cell in the newly created notebook with explanations, please watch the YouTube video above. Thanks!
