This guide provides detailed instructions for installing dbatools on a machine without internet access. dbatools is a comprehensive suite of PowerShell tools designed to help SQL Server professionals efficiently manage SQL Server instances.

Continue reading “Install DBATOOLS offline”

Configuring SSL/TLS Encryption (Data in transit) for SQL Server

What Are SQL Server Big Data Clusters?

If you’d like to stay updated, without doing the heavy work, feel free to register for my newsletter. I will email out blog posts of my journey down the wonderful road of BDCs.

When I think of SQL Server 2019, I think of BIG DATA CLUSTERS. I remember first hearing the term and immediately thinking, “eh, what does that have to do with a Windows SQL DBA?” But the more I thought about it, the more I fell in love with it. And here’s why:

First: the entire market is shifting more and more towards the cloud. Whether you personally believe it or not, or like it or not, it is happening. In 3-5 years from now, majority of jobs on the market will require some level of cloud knowledge (Azure, AWS, etc.)

Second: over the past few version of SQL Server (2016+) it’s apparent that Microsoft is pushing SQL Server down the path of becoming “OS agnostic.” By that I mean it will no longer matter what the underlying OS is. Windows? Linux? Who cares? SQL Server will run on it all!

Third: Microsoft has a game plan and I want to play a part!

What Are Docker Containers?

Containers, Containers, Containers. It’s one of those things that apparently has been around “forever” but I just recently started to hear about it. As a Windows SQL DBA, things like Containers seems absolutely foreign to me.

How To Install SQL Server 2019 on Linux (CentOS)

Everyone is talking about Linux. SQL on Linux? Wow! I think it’s about time I start to dive into it. If you’re like me, then the only thing you know about Linux is that you know it’s an OS. That’s it. I have never installed/configured it let alone installing/configuring an app on it.

Then came along SQL Server 2017 (and now 2019), and the ability to run it on Linux. That was really eye-opening to me. Microsoft definitely loves Linux!

This post will go into how to install Linux (CentOS) and SQL Server 2019.

Continue reading “How To Install SQL Server 2019 on Linux (CentOS)”

applicationintent=readonly in Azure Data Studio

Recently, I had to use Azure Data Studio to access a application intent read only secondary replica. I had to use Azure Data Studio because I was using a Mac. I usually use SSMS on my Windows machines. If you want to connect with the “applicationintent=readonly” property via SQL Server Management Studio, you do so by typing it out in the “Additional Connection Parameters” as shown in the screenshot below:

Continue reading “applicationintent=readonly in Azure Data Studio”How to Enable Instant File Initialization SQL Server

There are few features in SQL Server that are disabled, or turned OFF, by default that, in my opinion, should be turned on/enabled. One of those features is something called “Instant File Initialization” (You can read about the other one here).

Continue reading “How to Enable Instant File Initialization SQL Server”

BEST SQL Server Logging, Recovery, & Transaction Log Course

There is a great comprehensive training video series by Paul Randal called, “SQL Server Logging, Recovery and the Transaction Log.” This is a Pluralsight 7.5 hour brain dump of everything Paul Randal knows about logging, recovery and transaction log.

Continue reading “BEST SQL Server Logging, Recovery, & Transaction Log Course”

How to Change Autogrowth in SQL Server 2012

It’s wise to set SQL Server file autogrowth to a set number rather than the default percent. That is because the percent setting can end up growing your file very large. The larger your file, the larger that percent will be. It’s better to set your file autogrowth to a set number and keep an eye on it for proper maintenance. Continue reading “How to Change Autogrowth in SQL Server 2012”

I Can’t Start SQL Server Browser – FIX

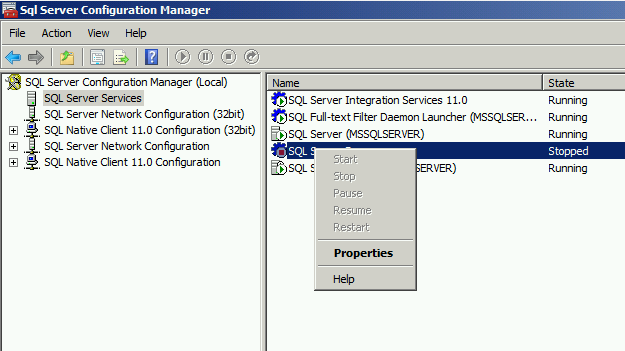

Recently a developer approached me at work and said, “I Can’t Start SQL Server Browser”

So, I logged into SQL Server Configuration Manager and saw that SQL Server Browser was stopped / off. When I right-clicked the SQL Server Browser to turn it on, I got this:

SQL Server DDL vs DML

For the longest time I’ve had a hard time remembering the difference between SQL Server DDL vs DML statements. I had a hard time remembering what statement fell under what category. Was INSERT, UPDATE, DELETE a DML command or DDL? What about CREATE / ALTER? It was all confusing to me. Continue reading “SQL Server DDL vs DML”

What’s Msg 208, Level 16, State 1, Line 1

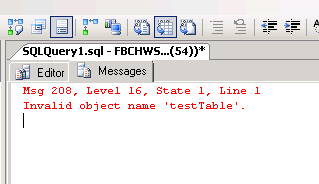

It’s usually something simple that’s overlooked that ends up causing the biggest troubleshooting headache. Let me explain. I was creating a test database called “testDB” with a test table called “testTable” (yes I know, I put a lot of thought in the naming of these objects) and when I tried to insert data into this new testTable I got the following error:

Msg 208, Level 16, State 1, Line 1 Invalid object name ‘testTable’.

What the hec? How can it be an invalid object? I just created it! Continue reading “What’s Msg 208, Level 16, State 1, Line 1”

What the hec? How can it be an invalid object? I just created it! Continue reading “What’s Msg 208, Level 16, State 1, Line 1”

Dirty Reads vs Phantom Reads in SQL Server

During a recent “interview” I was asked, “What two isolation levels in SQL Server will prevent phantom reads?”

I had never heard of “phantom reads” before but thought the person meant, “dirty reads.” So I replied, “READ COMMITTED and SNAPSHOT isolation levels.” Continue reading “Dirty Reads vs Phantom Reads in SQL Server”

How To Find All Tables, Columns, Data Types of SQL Server Database

Recently I had to find all the tables, columns, data types, etc. from a database. Below is a thorough script that brings back all the tables, attributes, data types, whether the column allows NULLS, whether it’s a Primary Key, or a Foreign Key (and if so, the referencing table). It’s extremely useful and easy to run. Continue reading “How To Find All Tables, Columns, Data Types of SQL Server Database”

How to Find Last Login Date of a SQL Server Login?

Feel free to watch the how-to video above or read below.

There are many options to find the last login date for a a SQL Server login. Even though there are awesome scripts like Adam Machanic’s “Who is Active” (download link here), sometimes you might find yourself without internet access, or perhaps at a client site that doesn’t have “Who is Active” installed and you forgot your thumb drive at home. :) Continue reading “How to Find Last Login Date of a SQL Server Login?”